Manifold learning

12/20/2023

The computational challenges in turbulent combustion simulations stem from the physical complexity and multi-scale nature of the problem, which make scale-resolving simulations intractable. For most engineering applications, the large scale separation between the flame (typically sub-millimeter scale) and the characteristic turbulent flow (typically centimeter or meter scale) allows us to invoke simplifying assumptions, such as those of the flamelet model, to pre-compute all the chemical reactions and map them to a low-order manifold. The resulting manifold is then tabulated and looked up at run time.

We analyze the impacts of extreme cases for offline versus online mining, including easy positive, batch semi-hard, and batch hard triplet mining as well as the neighborhood component analysis loss, its proxy version, and distance weighted sampling. We also investigate online approaches based on extreme distance, comprehensively compare the performance of offline and online mining based on the data patterns, and explain offline mining as a tractable generalization of online mining with a large mini-batch size. We also discuss the relations between different colorectal tissue types in terms of extreme distances. We found that offline mining can generate a better statistical representation of the population by working on the whole dataset.
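To make one of the online strategies named above concrete, here is a minimal sketch of batch hard triplet mining: for each anchor in a mini-batch, pick the farthest sample with the same label and the nearest sample with a different label. The function names, the toy 2-D embeddings, and the tissue labels are assumptions made for illustration and do not come from the work described here.

```python
import math


def batch_hard_triplets(embeddings, labels):
    """Return (anchor, positive, negative) index triplets for one mini-batch.

    Batch hard mining: the positive is the FARTHEST same-label sample and
    the negative is the NEAREST different-label sample for each anchor.
    """
    triplets = []
    for a, (x_a, y_a) in enumerate(zip(embeddings, labels)):
        positives = [i for i, y in enumerate(labels) if y == y_a and i != a]
        negatives = [i for i, y in enumerate(labels) if y != y_a]
        if not positives or not negatives:
            continue  # this anchor cannot form a triplet in this batch
        hardest_pos = max(positives, key=lambda i: math.dist(x_a, embeddings[i]))
        hardest_neg = min(negatives, key=lambda i: math.dist(x_a, embeddings[i]))
        triplets.append((a, hardest_pos, hardest_neg))
    return triplets


def triplet_loss(embeddings, triplets, margin=1.0):
    """Mean hinge loss max(d(a, p) - d(a, n) + margin, 0) over the triplets."""
    losses = [
        max(math.dist(embeddings[a], embeddings[p])
            - math.dist(embeddings[a], embeddings[n]) + margin, 0.0)
        for a, p, n in triplets
    ]
    return sum(losses) / len(losses)


# Toy mini-batch: hypothetical 2-D embeddings of five patches.
batch = [(0.0, 0.0), (1.0, 0.0), (3.0, 0.0), (10.0, 0.0), (11.0, 0.0)]
batch_labels = ["tumor", "tumor", "tumor", "stroma", "stroma"]

mined = batch_hard_triplets(batch, batch_labels)
loss = triplet_loss(batch, mined, margin=5.0)
```

Running the same selection over the whole dataset rather than one mini-batch is one way to read the description of offline mining as online mining with a very large batch, although the offline procedure studied here may differ in its details.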

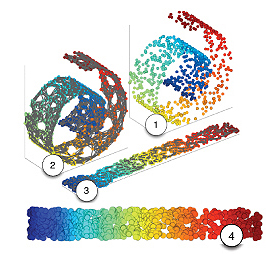

We analyze the effect of offline and online triplet mining for a colorectal cancer (CRC) histopathology dataset containing 100,000 patches. We consider the extreme, i.e., farthest and nearest, patches with respect to a given anchor, both in online and offline mining. While many works focus solely on how to select the triplets online (batch-wise), we also study the effect of extreme distances and neighbor patches before training in an offline fashion.

Locally Linear Embedding (LLE) is a nonlinear spectral dimensionality reduction and manifold learning method. It has two main steps, linear reconstruction and linear embedding of points, performed in the input space and the embedding space, respectively. In this work, we propose two novel generative versions of LLE, named Generative LLE (GLLE), whose linear reconstruction steps are stochastic rather than deterministic. GLLE assumes that every data point is caused by its linear reconstruction weights as latent factors. The proposed GLLE algorithms can generate various LLE embeddings stochastically, while all the generated embeddings relate to the original LLE embedding. We propose two versions of stochastic linear reconstruction, one using expectation maximization and another with direct sampling from a derived distribution by optimization. The proposed GLLE methods are closely related to and inspired by variational inference, factor analysis, and probabilistic principal component analysis. Our simulations show that the proposed GLLE methods work effectively in unfolding and generating submanifolds of data.
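For reference, the two steps of classical, deterministic LLE (linear reconstruction in the input space, then linear embedding) can be sketched in NumPy as follows. This is the standard algorithm, not the generative GLLE variants. The function name `lle`, its parameters, the regularization constant, and the toy helix data are assumptions chosen for illustration.

```python
import numpy as np


def lle(X, n_neighbors=5, n_components=2, reg=1e-3):
    """Classical LLE sketch: returns the embedding Y and the weight matrix W."""
    n = X.shape[0]

    # Find the k nearest neighbors of every point (excluding the point itself).
    sq_dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(sq_dist, np.inf)
    neighbors = np.argsort(sq_dist, axis=1)[:, :n_neighbors]

    # Step 1, linear reconstruction: weights that best rebuild each point
    # from its neighbors, constrained to sum to one.
    W = np.zeros((n, n))
    for i in range(n):
        Z = X[neighbors[i]] - X[i]            # neighbors centered on point i
        G = Z @ Z.T                           # local Gram matrix, shape (k, k)
        # Regularize so G stays invertible even when k exceeds the input dimension.
        G = G + reg * (np.trace(G) + 1e-12) * np.eye(n_neighbors)
        w = np.linalg.solve(G, np.ones(n_neighbors))
        W[i, neighbors[i]] = w / w.sum()

    # Step 2, linear embedding: low-dimensional points that are rebuilt by the
    # same weights, given by the bottom eigenvectors of (I - W)^T (I - W).
    I_minus_W = np.eye(n) - W
    M = I_minus_W.T @ I_minus_W
    _, eigvecs = np.linalg.eigh(M)            # eigenvalues in ascending order
    # Skip the first eigenvector (approximately constant, eigenvalue near zero).
    Y = eigvecs[:, 1:n_components + 1]
    return Y, W


# Toy data: 60 points along a helix in 3-D.
t = np.linspace(0.0, 3.0 * np.pi, 60)
X = np.column_stack([np.cos(t), np.sin(t), 0.1 * t])
Y, W = lle(X, n_neighbors=6, n_components=2)
```

In this deterministic version the weights `W` are a fixed solution of a least-squares problem. GLLE, as described above, treats those reconstruction weights as latent factors and makes this step stochastic.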
